My post of 23 March 2023 noted that the UK Passport Office website included the “X-Clacks-Overhead” header referencing Sir Terry Pratchett’s “Clacks”, a technology in the fictional Disc World much like the telegraph (or internet) of our world.

I noticed today that the independent Passport Office website has now been swallowed by the all encompassing “gov.uk” mega site. A pity. Neither the gov.uk site itself, nor any other HMG website I can find, now appears to include the header. So, some humourless bureaucrat in the Cabinet Office (for I suspect the fault lies there) has decided that the reference is frivolous and unnecessary.

Wouldn’t have happened on my watch……

Permanent link to this article: https://baldric.net/2026/01/25/humourless-bureaucrats/

In October last year I posted about my email phishing attack against a group of my friends (at least, I think they are still my friends). That post was an attempt to describe some of the problems inherent in the way people trust the systems they use on a daily basis. People are naturally trusting, but unfortunately people also do dumb things. A lot.

Because people do dumb things, and because on-line systems are now so highly sophisticated and their usage is so deeply embedded in the way people do things on a day to day basis, many organisations (particularly in the finance sector) offer guidance and best practice advice on how people should protect themselves from on-line scams and attacks. All, but all, of that advice stresses that:

– you should not automatically trust an email, particularly if it comes from an unusual or unexpected source;

and

– you should be particularly suspicious of any text or email that gives a link to an unknown website or asks you to download an attachment or install a new app or package.

Continue reading

Permanent link to this article: https://baldric.net/2026/01/25/understand-your-customer/

A couple of weeks or so ago, an old friend from the bike club asked in our club messaging group why supposedly sophisticated companies (such as Jaguar Land Rover. M & S and the Co-op) were still so susceptible to “hacking attacks”. I gave a somewhat flippant reply along the lines of “because their security is crap”. I also muttered about “software monocultures”, over-reliance on single outside “managed IT service providers” and poor, or non-existent, internal expertise, auditing and monitoring.

However, on giving that reply some more thought, I concluded that a more full, and rounded reply might be necessary.

Continue reading

Permanent link to this article: https://baldric.net/2025/10/26/the-people-problem/

Yesterday, 10 October 2025, the Nobel Committee announced that it had awarded the 2025 Nobel Peace Prize to Maria Corina Machado, the Venezualan opposition leader. Machado had been forced into hiding following Venezuela’s presidential election in January 2025, when authorities loyal to Nicolas Maduro, the country’s autocratic leader, declared, despite all evidence to the contrary, that Maduro had won a third term in office.

In their anouncement, the Committee said:

In the past year, Ms Machado has been forced to live in hiding. Despite serious threats against her life, she has remained in the country, a choice that has inspired millions.

They went on to say:

When authoritarians seize power, it is crucial to recognise courageous defenders of freedom who rise and resist.

So, the mad, orange, man baby currently waging war against the US Constitution, and his own citizens, didn’t get his way. And the Nobel Committee managed to poke him with a stick whilst making its award.

But also yesterday, John Lodge died. So it wasn’t all good.

Permanent link to this article: https://baldric.net/2025/10/11/friday-10-october-2025/

But, not, it would seem, irony:

Following the (pardon me, unforgivably stupid) US strikes on three Iranian sites (Natanz, Isfahan and Fordo) last night, a statement from Russia’s foreign ministry said: “The irresponsible decision to subject the territory of a sovereign state to missile and bomb attacks, whatever the arguments it may be presented with, flagrantly violates international law, the Charter of the United Nations and the resolutions of the UN security council.”

“We call for an end to aggression and for increased efforts to create conditions for returning the situation to a political and diplomatic track.”

Anyone forgotten Ukraine?

(Well, at least the US didn’t use “tactical” nuclear weapons in the strikes, as had been trailed in earlier news. That is some small mercy.)

Permanent link to this article: https://baldric.net/2025/06/22/diplomacy-may-be-dead/

Well, this is fascinating.

Shortly after I posted the commentary below I received a connection from an IP address which belongs to cellcom.co.il and geolocates to Haifa in Israel. That IP address directly, and specifically, requested a copy of my post entitled “more dumb legislation” immediately followed by a request for a copy of my “robots.txt” control file. The user-agent identified the browser used as Firefox version 77 on Windows 10 – a highly generic, and possibly spurious user-agent.

The internet is a truly wonderful space.

Permanent link to this article: https://baldric.net/2025/02/27/%d7%a9%d7%9c%d7%95%d7%9d-%d7%95%d7%91%d7%a8%d7%95%d7%9b%d7%99%d7%9d-%d7%94%d7%91%d7%90%d7%99%d7%9d/

On Tuesday of this week (25 February 2025) every major print newspaper in the UK carried an identical front page under the heading “Make it FAIR”. They did this in order to raise awareness of the impending legislation (currently in “consultation”) intended to change the law in the UK to favour big tech platforms in order that they may use British creative’s copyrighted material to train their AI models without permission or compensatory payment.

This is plain stupid – particularly at a time when US politics is highly volatile and in thrall to the very same big tech companies which own these emergent AI technologies.

The print media coverage pointed to a campaign letter asking readers to contact their MPs to urge the UK Government to see some sense and block this pillage of creative content. As a (small) creative content author, albeit one who is not concerned about personal monetary reward, I read the campaign letter and decided that I should lobby my MP, Ben Goldsborough, in line with the campaigns aims.

What follows is my response to my MP’s boilerplate reply to my initial email.

Continue reading

Permanent link to this article: https://baldric.net/2025/02/27/more-dumb-legislation/

Well, not quite, but distinctly possible.

El Reg has continued comment on the lunacies of the OSA I reported on in my last post. The Reg reports that one Neil Brown, a Director of a UK law firm, believes that individuals who run their own websites (so that would include me) could be held liable for off-topic visitor-posted comments that break the UK’s Online Safety Act.

The Reg reports that “Brown believes individuals running websites could be held accountable for posts by third-parties that are unrelated (off-topic) to the content on that site (eg, an AI-generated explicit image posted to a bike blog).”

In my time as a ‘net user I have seen a huge number of small sites which do not properly moderate user comments and thus leave themselves open to spam ‘bot posts, often pointing to the sort of sites that could not be classed as “child friendly”. If Brown is correct in his belief (and he may be unless Ofcom can provide definitive guidance to the contrary) then those sites will in future potentially be open to prosecution for breach of the law.

My own approach to comments here on trivia has always been one of very tight moderation – I do not allow any comment until I have seen it and agreed to its publication. Probably 99.99% of all attempted comments here are from spam ‘bots (lately mostly trying to get links to “binance” or other dodgy financial sites published). I routinely delete all such comments from my moderation queue. That is the reason you see so few comments here (well, that and the fact that not many real people comment……)

However, until such time as Ofcom can clarify the position on the OSA’s applicability to sites such as trivia, I am stopping comments altogether. It won’t make much difference to readers of trivia because they won’t see any change, but it will reduce my need to moderate the site and will certainly make me safer. If approached by Ofcom in future, I can then honestly say “I do not allow comment at all”.

I do worry though about the impact on small sites such as that run by the owner of my favourite bike forum.

Permanent link to this article: https://baldric.net/2025/02/07/blog-comments-illegal-shock-horror/

I mentioned in my (off normal topic) post of 15 November last year, that I am a member of a motorcycle forum. I am also a member of other online fora, but the bike forum is the only one I really engage with often enough to care about this latest bit of legislative stupidity.

In March of this year, the first parts of the 2023 UK Online Safety Act will come into effect. The Act was, and is, designed to protect children and adults from potential harms online and creates new legal duties for the online platforms and apps affected.

As with much legislation, particularly that covering activity on the ‘net, there are some (probably) unintended consequences arising from the broad way in which the legislation was drafted, and approved.

Continue reading

Permanent link to this article: https://baldric.net/2025/02/01/unintended-consequences-or-dumb-legislation/

This is largely for my old friend Rob who sometimes chides me on the lack of activity here (and for missing trivia’s birthday – born 24 December 2006).

So trivia is now 18 years old (and sometimes feels older). I promise I’ll post more next year (he said).

And at this time of year my lady makes cakes for the boys for Christmas day. This one is this year’s chocolate feast.

Merry Christmas.

Permanent link to this article: https://baldric.net/2024/12/24/trivia-may-now-vote/

I am a member of an odd (very odd at times) motorcycle forum. That forum runs a “motorcycle picture of the week” competition. This week, the challenge, set by “Viator” was to show a picture of your bike “with something you have made”.

Now since I do not make much in the way of actual artefacts, and most of what I do “make” is somewhat intangible: – unnecessarily complicated home networks, VPNs, servers running esoteric bits of FLOSS etc. I initially ruled myself completely out of the running this week. (In fact I don’t often contribute at all). Then I thought, what about featuring my bike in something I make, this blog.

So here you are: my bike, this morning, on my blog. Nothing at all to do with any of the usual topics, but hey, it is my blog so I can post what I want.

Permanent link to this article: https://baldric.net/2024/11/15/and-now-for-something-completely-different/

This email just in from the tor project team.

From: gus

To: tor-relays@lists.torproject.org

Subject: [tor-relays] Update: Tor relays source IPs spoofed to mass-scan port 22

Date: Thu, 7 Nov 2024 15:49:37 -0300

Hello everyone,

I’m writing to share that the origin of the spoofed packets has been

identified and successfully shut down today, thanks to the assistance

from Andrew Morris at GreyNoise and anonymous contributors.

I want to give special thanks to the members of our community who have

dedicated their time and efforts to track down the perpetrators of this

attack.

Although this fake abuse incident had minimal impact on the network —

temporarily taking only a few relays offline — it has been a

frustrating issue for many relay operators. However, I want to reassure

everyone that this disruption had no effect on Tor users whatsoever.

We’re incredibly fortunate to have such a skilled and committed group of

relay operators standing with Tor.

Thank you all for your resilience, ongoing support and for making the

Tor network possible by running relays.

Gus

—

The Tor Project

Community Team Lead

_______________________________________________

tor-relays mailing list — tor-relays@lists.torproject.org

To unsubscribe send an email to tor-relays-leave@lists.torproject.org

And the tor project team now have a blog post about the spoofing attack.

So – some good news. Now perhaps we can all relax a bit more.

Permanent link to this article: https://baldric.net/2024/11/07/spoof-source-identified/

And may even be malicious.

I have been receiving “malicious activity” reports from my hosting ISP about my Tor node at “tor1.rlogin.net” since about the end of October. So far I have received five such reports. Each report takes the following form:

We have received an abuse report from abuse@watchdogcyberdefense.com for your IP address 95.216.198.252.

We are automatically forwarding this report on to you, for your information. You do not need to respond, but we do expect you to check it and to resolve any potential issues.

Please note that this is a notification only, you do not need to respond.

Kind regards

Abuse Team

Continue reading

Permanent link to this article: https://baldric.net/2024/11/06/watchdogcyberdefense-com-are-complete-bozos/

I have just discovered, shamefully late, that Ross Anderson died at his Cambridge home at the end of last month. He was only 67. Anderson was Professor of Security Engineering at the Cambridge University’s Department of Computer Science and Technology. He had worked at the University since the early 1990s.

Professor Anderson was famously rabidly anti spook, but more justifiably famous for his work in privacy and particularly in those aspects of computer security (or wider technology) which could impact on personal privacy.

I cannot pretend to have known Professor Anderson, but I had, and have, the greatest respect for his work. Anyone who can annoy certain parts of GCHQ as much as he did deserves commendation. Professor Anderson’s obituary can be found here. There are also good tributes by John Naughton and Bruce Schneier. There are plenty of others on-line should you care to search.

I am deeply sorry that I only found out about his passing from the latest “feisty duck” newsletter. My only excuse is that I don’t follow twitter, nor am I as active in following many of the security sources I used to read. One of the impacts of (long) retirement I fear.

RIP Professor.

Permanent link to this article: https://baldric.net/2024/04/30/ross-anderson/

Yesterday’s i newspaper lead with a report that SIS HQ at Vauxhall Cross could be overlooked from a flat in the new residential property built at St George Wharf. Said flat was reportedly purchased by Russians with links to a Soviet era property in Moscow which is roughly 300 metres away from the “Russian Intelligence chemical site” that developed Novichok.

The i report went on to say that Alicia Kearns, the Chair of the Parliamentary Foreign Affairs Committee, told the newspaper, “It’s no surprise that hostile states are buying up properties for surveillance purposes – but it’s the Government’s job to stop them.”

Let’s just hope that the Russians have never heard of IMSI catchers.

Permanent link to this article: https://baldric.net/2023/12/21/sis-troubles/

My last post described how to add a custom X-header to outgoing email in postfix. But of course this approach is rather a blunt instrument because it necessarily adds the header to all outbound mail which originates from my server. In my particular case that does not matter overmuch, because any and all mail accounts on that server are either mine, an administrative account, or a family member’s. But this approach would be no good for say, a corporate server (unless that Corporation had specifically agreed that approach).

Better by far if individual users could decide whether they wish to add the custom header to their local account(s). So the best place to add a header will be in the MUA, not the MTA as I had done. My MUA of choice is claws (for some reasons see “All email clients suck“). Like Steve Litt, the author of that post, I find claws the least sucky of all the mail clients I have tried (and I particularly abhor that bastardisation of standards which is inherent in HTML email in a bloody browser). Claws is fast, lightweight, standards based, handles my IMAPS mail connections to dovecot on my mail server admirably easily, allows me to keep all my email plain text based and does not down load any in-line images unless I tell it to.

Continue reading

Permanent link to this article: https://baldric.net/2023/03/23/custom-headers-in-claws-mail/

In my post last week about the X-Clacks-Overhead HTTP header I mentioned that I had added the header to my postfix configuration as outlined in the advice given at gnuterrypratchett.com. As it turns out that advice does not work exactly as I wanted.

Firstly, and most importantly, using the “header_checks” table is sub-optimal because it adds the header to both outgoing and incoming email. This has the effect that all mail coming in to me (or any of the multiple other email accounts handled by my postfix server) now contains the header so I can’t be sure whether or not the outside originating server added the header or it came from me.

Secondly, for our purposes, the “/^X-Clacks-Overhead:/ IGNORE” line in the sample header_checks file is redundant – all that is necessary is the instruction to postfix to prepend the “X-Clacks-Overhead:” line to the existing header of your choice.

A much better approach I have found is to use the “smtp_header_checks” instruction to postfix – that way the header is only added to mail leaving our server. The postfix manual says:

smtp_header_checks (default: empty)

Restricted header_checks(5) tables for the Postfix SMTP client. These tables are searched while mail is being delivered. Actions that change the delivery time or destination are not available.

Thus the instructions at gnuterryprachett.com would be improved if it said:

Step 1: Where to put it:

/etc/postfix/main.cf

Step 1: What to add to main.cf:

smtp_header_checks = regexp:/etc/postfix/smtp_header_checks

Step 2: Where to put it:

/etc/postfix/smtp_header_checks

Step 2: What to add to smtp_header_checks:

/^Subject:/i PREPEND X-Clacks-Overhead: GNU Terry Pratchett

You could prepend the text to any existing header which you are sure will appear in the outgoing email. I chose “Subject” because all of my outgoing email has that header. Of course if I leave the Subject field empty in any email, then the X-Clacks_Overhead header will not be added.

I’ve emailed the admin of gnuterrypratchett.com.

Permanent link to this article: https://baldric.net/2023/03/14/postfix-x-headers/

Sadly, over the last few posts I have received way too many attempted porn links as comments. They don’t reach the public face of trivia because of my comment policy, but they are becoming tiresome in the extreme and I have to edit the damned things so I have (hopefully temporarily) turned off all comments.

Permanent link to this article: https://baldric.net/2023/03/10/comment-block/

For some years now I have included the “X-Clacks-Overhead” header in trivia’s lighttpd.conf as a tribute to the late great Sir Terry Pratchett. I am a huge fan of Pratchett’s Discworld series. You may not see the header when you browse trivia, but it is there. Users of linux based systems can easily inspect the headers using curl (“curl -I https://baldric.net” will list them for you).

The header in question is a reference to the “Clacks” which is a technology in the Discworld much like the telegraph (or internet) of our world. The name comes from the noise that the technology made in use (it “clacked”). The rather lovely xclacksoverhead website gives a nice explanation of the origins of the header.

It was whilst visiting that site today that I discovered that there appear to be over 1300 websites out there which similarly include the X-Clacks-Overhead header, including Debian, Mozilla and, strikingly, the UK Passport Office. It is good to see that even officialdom can show support, albeit in a non-obvious way. I salute the unknown sysadmin for the passport office site.

Continue reading

Permanent link to this article: https://baldric.net/2023/03/09/x-clacks-overhead/

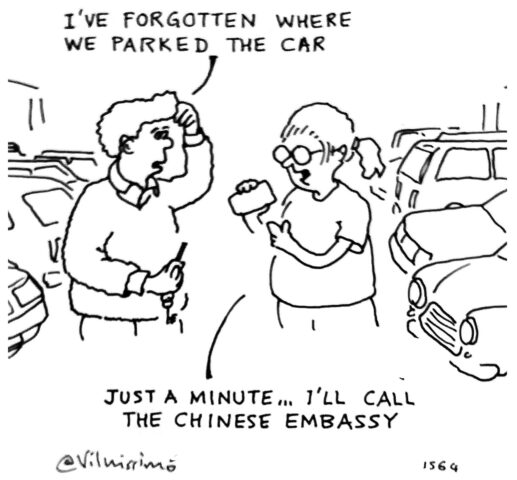

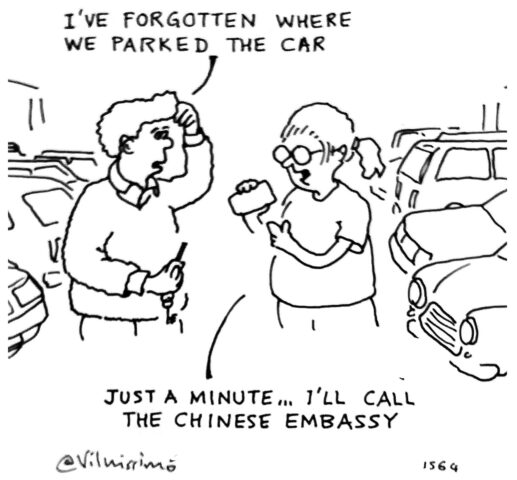

Last month I posted an article about the press reports of chinese software and hardware “found” in cars and how that could lead to the cars being tracked by the chinese state (or other hostile agencies). I was therefore delighted to see the cartoon below in issue 1591 of Private eye.

I am indebted to both Private Eye, and the cartoonist Vilnis Vesma for their kind permission to allow me to repost that cartoon here.

Enjoy.

Permanent link to this article: https://baldric.net/2023/02/19/lost-car/

In my last post, an ex GCHQ staffer is quoted as saying:

“If you’re stepping back a bit and saying what cars do park outside GCHQ or somewhere like Porton Down then you have the pool of information there if you ever need it.”

which got me wondering about how secure existing protective measures around the use of mobile ‘phones in and around some sites actually are.

For fairly obvious reasons, secure sites already require that visitors (or staff) leave all mobile communication devices, laptops or any electronic device capable of making audio or video recordings, in shielded lockers on entry. Mobiles must be switched off before being locked away.

Now what is the first thing anyone does when picking up their switched off mobile on leaving? What is the first thing you do when landing at a holiday resort when you have had your ‘phone switched off for the flight?

You turn it back on.

So, any hostile agency capable of locating something like an IMSI catcher aka Stingray anywhere near such a site would have the ability to capture the details of any mobile device when it was switched back on as the owner was leaving the secure location. That gives the attacker a veritable trove of information about who goes to such sites, how often, and how long they stay there.

Let’s just hope that all “secure” sites are completely secured and isolated from IMSI catchers and any other nefarious form of mobile surveillance technology. And of course, we must hope that anyone actually driving to, and parking at, such a site has such an old vehicle that it does not contain any “connected car” telemetry capability.

Permanent link to this article: https://baldric.net/2023/01/16/mobile-insecurity/

Some parts of the UK press have been reporting recently on the “discovery” of “hidden Chinese tracking devices” in a UK Government car (the original inews report is behind a paywall).

The reports quote a “serving member of the British intelligence community” as telling the i newspaper: “It [the tracking SIM] gives the ability to survey government over a period of months and years, constantly filing movements, constantly building up a rich picture of activity. You can do it slowly and methodically over a very, very long time. That’s the vulnerability.”

Furthermore, the report goes on to say that “a former GCHQ analyst told the paper that it was unlikely that this was a targeted operation focussing on a single politician but rather represented a broad data mining approach by the Chinese Communist Party.”

(Sensible comment from a GCHQ staffer.)

Continue reading

Permanent link to this article: https://baldric.net/2023/01/16/brakes-as-a-service/

I use signal as my instant messenger app on my ‘phone and I have the desktop version installed on my, well, desktop. Signal was written by the kind of people I trust and in my view it is infinitely better than plain unencrypted SMS and much better than any of the alternative IMs around (whatsapp, telegram, imessage, google messages et al.) Like most modern messaging apps, signal gives you one to one messaging, group messaging, video calling and image messaging, and it does it privately and securely. What more could a privacy nut want?

A couple of days ago my old friend Rob sent me a signal message reminding me (yet again) that I had failed to make any comment on trivia’s birthday. In fact, during 2022 I have made the sum total of three (four if you count this one) posts. My bad. All I can say is that I have been busy. As I told Rob in my initial reply I have a bunch of things I want to post about, but right now, none of them are so particularly pressing that I feel the need to hit the keyboard. Because we don’t correspond that frequently, my conversation with Rob strayed off onto a variety of topics (motorcycles, computer backup strategies, the ever expanding usage of consultants in Government for example). Now, at the time I was conversing with Rob, I was also in the middle of a separate thread with my old chums from the bike club, also on signal. But the conversation with Rob was on my desktop whilst the one with the bike club was on my ‘phone. I think you can guess what happened next.

Yep, I posted a note to Rob that should have gone to the bike club. Fortunately Rob agreed with the contents of my inadvertant post, but it could have gone horribly wrong.

As Rob said, the biggest failure in any secure system is the human element. All the security in the world can’t help you if you are an idiot.

A (belated) Merry Christmas to my readers and a Happy New Year. May 2023 bring you all that you could wish for.

Permanent link to this article: https://baldric.net/2022/12/30/signal-failure/

Way back in February of this year when I concluded my rant about systemd I said:

“Given that Ubuntu is tied closely to systemd and will be implementing the ridiculous systemd-homed.service shortly, and that Mint is based on Ubuntu and will perforce probably follow, I have now given up on Mint and moved to another distro which will not do so. In my next post I will discuss which distro, and why I chose it.”

Well, my apologies for the delay in writing this. All I can say is, “I’ve been busy”.

Continue reading

Permanent link to this article: https://baldric.net/2022/10/09/systemd-free/